You have surely heard of Docker: it’s one of the hottest technologies of the moment and it’s gaining momentum quickly. The numbers presented at DockerCon 2017 were about 14 million Docker hosts, 900 thousand apps, 3,300 project contributors, 170 thousand community members and 12 billion image downloads.

In this series of articles we’d like to introduce the basic concepts of Docker, so as to have a solid basis before exploring the wide ecosystem around it.

Docker was born as an internal project at dotCloud, a PaaS company, and was originally based on the LXC container runtime. It was introduced to the world in 2013 with a historic demo at PyCon, then released as an open-source project. The following year Docker moved away from LXC, whose development was slow and not keeping pace with Docker’s, and began developing libcontainer (later the basis of runc), written entirely in Go, with better performance and improved security and isolation between containers. Since then, a crescendo of sponsorships, investments and general interest has elevated Docker to a de facto standard.

Docker is part of the Open Container Initiative (originally announced as the Open Container Project), a Linux Foundation project that governs the open standards of the container world and includes members such as AT&T, AWS, Dell EMC, Cisco, IBM, Intel and the like.

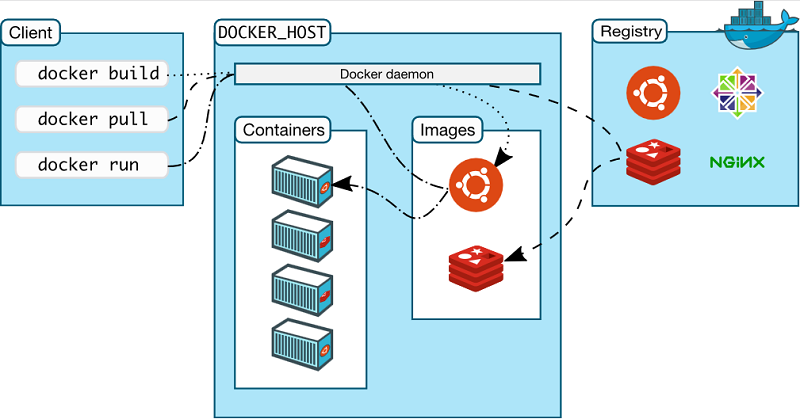

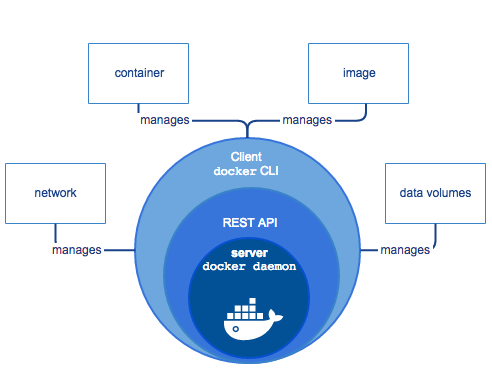

Docker is based on a client-server architecture: the client talks to the dockerd daemon, which builds, runs and distributes containers. Client and daemon can run on the same host or on different systems; either way, the client communicates with the daemon through a REST API, over a Unix socket or a network interface. A registry stores images: Docker Hub is a public cloud registry, while Docker Registry can be run as a private, on-premises registry.

Docker Engine is the client-server application that manages these components.
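
As a rough illustration of the client side of this architecture, the sketch below resolves a daemon address and sends a single request to the Engine’s REST API. It assumes the conventional `DOCKER_HOST` address formats (`unix://` and `tcp://`), the usual socket path `/var/run/docker.sock`, the conventional unencrypted TCP port 2375 and the API’s `/_ping` endpoint. The helper names (`parse_docker_host`, `UnixHTTPConnection`, `ping`) are ours and not part of any Docker library; this is a minimal sketch, not how the official client is implemented.

```python
import http.client
import socket
from urllib.parse import urlparse

# Assumption: the usual default location of the daemon's Unix socket.
DEFAULT_HOST = "unix:///var/run/docker.sock"


def parse_docker_host(value=DEFAULT_HOST):
    """Split a DOCKER_HOST-style address into (transport, target)."""
    parsed = urlparse(value)
    if parsed.scheme == "unix":
        return ("unix", parsed.path)
    if parsed.scheme == "tcp":
        # Assumption: 2375 is the conventional unencrypted daemon port.
        return ("tcp", (parsed.hostname, parsed.port or 2375))
    raise ValueError(f"unsupported scheme: {parsed.scheme!r}")


class UnixHTTPConnection(http.client.HTTPConnection):
    """HTTP connection that speaks over a Unix socket instead of TCP."""

    def __init__(self, socket_path, timeout=5):
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self):
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        sock.connect(self.socket_path)
        self.sock = sock


def ping(host=DEFAULT_HOST):
    """Send GET /_ping to the daemon and return the response body."""
    transport, target = parse_docker_host(host)
    if transport == "unix":
        conn = UnixHTTPConnection(target)
    else:
        conn = http.client.HTTPConnection(*target, timeout=5)
    try:
        conn.request("GET", "/_ping")
        return conn.getresponse().read().decode()
    finally:
        conn.close()
```

Calling `ping()` on a machine with a running daemon exercises the local Unix socket, while `ping("tcp://some-host:2375")` (a hypothetical address) goes over the network. The request itself is identical in both cases, which is the point of the client-server split.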

Container vs VM

Docker is about containers, a virtualization concept whose roots go far back in time (the earliest precursor is chroot, introduced in Unix in 1979). Only recently, however, has it gained solid development and momentum, with a number of implementations of the technology ensuring its success.

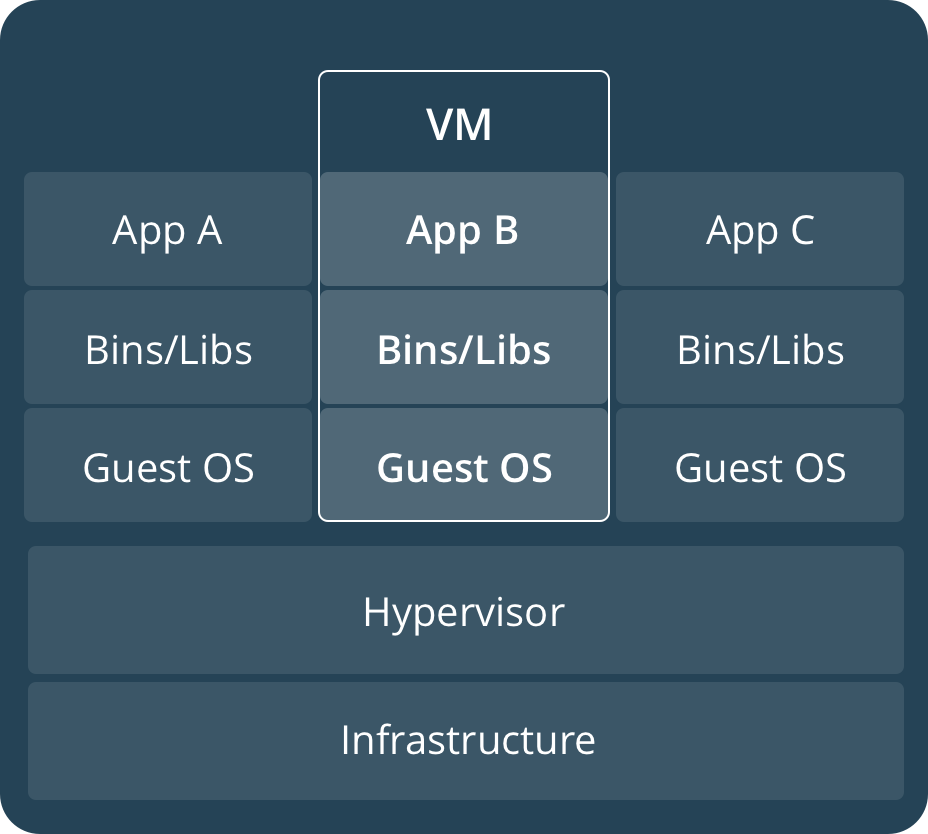

Containers (CT) and virtual machines (VM) are conceptually different, yet the user experience is almost the same in several circumstances.

A VM recreates a whole operating system, kernel and user-space included, on top of virtualized hardware. Its use of computational resources is intensive, and the running state is the result of a hard-to-replicate combination of dependencies, system states and runtimes.

On the other hand, a CT recreates just the user-space and not the kernel: it uses the kernel of the underlying host operating system. Consequently, and strictly speaking, a container on a Linux host can only run Linux and not Windows, macOS or *BSD (which has a similar virtualization paradigm of its own), as a virtual machine could. Each CT has just the code, dependencies and settings needed to run the application it is deployed for; the kernel is shared with the underlying operating system.
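
One way to observe the shared kernel is to print the kernel release on a Linux host and again from inside a container running on it: since the CT has no kernel of its own, both should report the same release. A minimal sketch using only the Python standard library (the function name `kernel_summary` is illustrative):

```python
import platform


def kernel_summary():
    """Return the kernel name, release and CPU architecture seen by this process."""
    info = platform.uname()
    return {
        "system": info.system,    # e.g. the kernel family
        "release": info.release,  # kernel release string
        "machine": info.machine,  # CPU architecture
    }


if __name__ == "__main__":
    for key, value in kernel_summary().items():
        print(f"{key}: {value}")
```

Run inside a VM, the same script would instead report the guest’s own kernel, which is exactly the difference between the two models.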

The advantages of this paradigm are essentially two. The first concerns the computational resources needed for virtualization: a CT needs only enough to recreate the user-space, resulting in less overhead than full kernel emulation. The size of a CT is contained too, since the kernel, apps and libraries that a complete operating system provides (as in a VM) but that are not actually used are simply not present. The second advantage is the compatibility and portability of containers, exemplified by the “Build, Ship and Run Any App, Anywhere” motto.

In fact, the images that containers are built from need only Docker to be used, and since Docker is available for several operating systems and architectures, containers are a host-agnostic technology. Code can be written on Windows and then run on Linux thanks to the portability guaranteed by Docker; and since the state of a container can be replicated quite easily, many compatibility issues disappear too.

All in all, containers are not just “virtual machines that boot up quicker”: they open the door to the micro-services paradigm, a deployment concept in which monolithic services are replaced by several atomic services, the micro-services themselves.

Docker embraces this idea by providing, say, one container for the web server, one for the database and one for the scripting language instead of a single, big LAMP-stack virtual machine.
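
To make the split concrete, the sketch below builds (without executing them) the `docker run` command lines for three separate containers attached to a shared network. The image names (`httpd`, `mariadb`, `php:fpm`), the container names and the network name `lamp-net` are illustrative assumptions. Real images also need their own configuration (database credentials, for example) before they start correctly, and the network would have to be created beforehand.

```python
import shlex

# Hypothetical layout: one container per role instead of one LAMP virtual machine.
SERVICES = {
    "web": "httpd",
    "db": "mariadb",
    "php": "php:fpm",
}


def run_command(name, image, network="lamp-net"):
    """Build the argument list for a detached `docker run` of one service."""
    return [
        "docker", "run",
        "--detach",            # run in the background
        "--name", name,        # container name
        "--network", network,  # attach to the shared network
        image,
    ]


def plan(services=SERVICES, network="lamp-net"):
    """Return one command per service, in declaration order."""
    return [run_command(name, image, network) for name, image in services.items()]


if __name__ == "__main__":
    # Only print the commands; nothing is started here.
    for argv in plan():
        print(shlex.join(argv))
```

Because each role is its own container, any one of them can be upgraded, replaced or scaled without touching the other two, which is what a single monolithic VM makes difficult.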

The advantages brought by micro-services are many and deserve a complete, separate article; however, this paradigm is not the winning one in all cases, and each case must be carefully evaluated.

Installation requirements and supported systems

Docker is available in two editions: the Community Edition (CE) and the Enterprise Edition (EE). The former is free and has two update channels (Stable, with quarterly releases, and Edge, with monthly releases); the latter is paid and is designed for business-critical contexts. The EE includes advanced capabilities such as advanced management of containers and images, a certified ecosystem and image security scanning.

Supported operating systems include macOS, Windows 10, Windows Server 2016 and Linux (several distros including CentOS, RHEL, SUSE, Ubuntu and Debian); supported architectures include x86_64, ARM, ARM64 and IBM Z. On macOS and Windows 10, where Docker runs Linux containers inside a lightweight virtual machine, the processor must have hardware virtualization enabled.

Check the official documentation for OS- and platform-specific installation instructions. Note that on Windows 7, 8 and 8.1 Docker has to be installed with Docker Toolbox rather than with the standard installer.

Docker Cloud allows installation on partner cloud environments such as Amazon Web Services, Microsoft Azure and DigitalOcean.

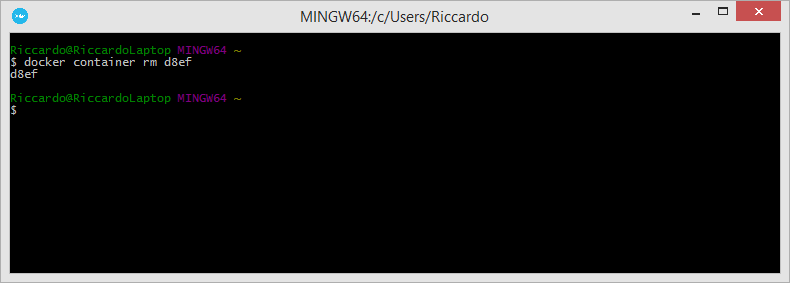

Docker is preferably used from the command line, but it can also be managed through a GUI such as Portainer or Rancher. Orchestration tools like Docker Swarm and Kubernetes will be covered in another article.